Introduction

Rendering is the process of generating an image from a 2D or 3D model by means of computer programs. It is the final stage of creating a 3D image or animation. The term “real-time rendering” refers to producing images fast enough to display them as they are computed, so that the user can watch and interact with the result. Offline rendering, by contrast, can spend seconds to hours on each frame and stores the finished images for later playback, as in animated films. Real-time rendering is used in computer games, simulations and other applications where the graphics must respond immediately to user input. There are many different techniques that can be used for real-time rendering, and the choice of technique depends on the requirements of the application. The two main approaches are rasterization and ray tracing. Mechanisms such as framebuffer objects in OpenGL, which let a renderer draw into an offscreen image instead of directly to the screen, are building blocks used by these approaches rather than rendering techniques in their own right.

What is real-time rendering in 3D and how does it work?

Real-time rendering is the process of generating image frames quickly enough to keep up with the display, typically at 30 to 60 frames per second or more. This is usually achieved by using a graphics processing unit (GPU) to perform the computational operations required for rendering, such as vertex transformations, texture mapping, and lighting calculations. The resulting images are then displayed on a screen at a rate fast enough to create the illusion of continuous motion.
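Texture mapping, one of the operations listed above, looks up a color from an image using (u, v) coordinates attached to the surface. As an illustration, here is a minimal bilinear texture lookup in Python. It assumes a grayscale texture stored as a list of rows and clamp-to-edge addressing; the function name `sample_bilinear` is hypothetical, and on real hardware this filtering is done by dedicated texture units on the GPU.

```python
import math


def sample_bilinear(texture, u, v):
    """Sample a grayscale texture (list of rows) at UV coordinates in [0, 1].

    Texel centers sit at ((i + 0.5) / width, (j + 0.5) / height). Coordinates
    that fall outside the texture are clamped to the edge texels.
    """
    height = len(texture)
    width = len(texture[0])
    # Map UV into continuous texel space so that texel centers land on integers.
    x = u * width - 0.5
    y = v * height - 0.5
    x0 = int(math.floor(x))
    y0 = int(math.floor(y))
    fx = x - x0  # horizontal blend weight
    fy = y - y0  # vertical blend weight

    def texel(i, j):
        # Clamp-to-edge addressing.
        i = min(max(i, 0), width - 1)
        j = min(max(j, 0), height - 1)
        return texture[j][i]

    # Blend horizontally on two rows, then blend those results vertically.
    top = texel(x0, y0) * (1 - fx) + texel(x0 + 1, y0) * fx
    bottom = texel(x0, y0 + 1) * (1 - fx) + texel(x0 + 1, y0 + 1) * fx
    return top * (1 - fy) + bottom * fy


checker = [[0.0, 1.0], [1.0, 0.0]]
print(sample_bilinear(checker, 0.5, 0.5))  # 0.5: an even blend of all four texels
```

Bilinear filtering is chosen here over nearest-neighbor lookup because it avoids blocky texels when a texture is magnified, at the cost of four reads instead of one.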

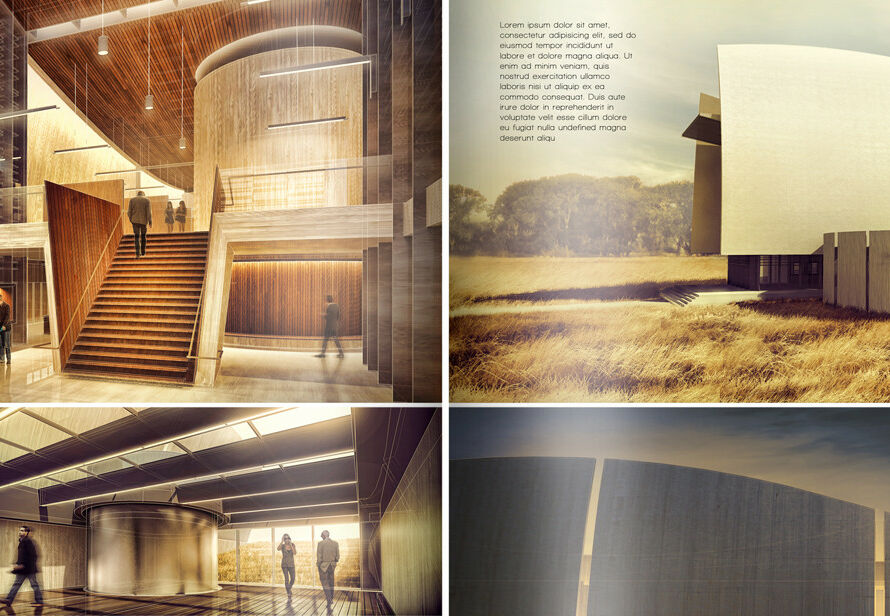

Real-time rendering is used extensively in video games and other applications that require interactive 3D graphics. It is also used in simulation and visualization, for example in architectural walkthroughs and medical imaging.

The basic idea behind real-time rendering is to generate each frame within a fixed time budget, so that it is ready before the display needs the next one: at 60 frames per second, that budget is about 16.7 milliseconds. Meeting it requires efficient algorithms and data structures, as well as specialized hardware acceleration such as GPUs.
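The per-frame time budget follows directly from the target frame rate. The sketch below computes it and checks whether a set of per-stage costs fits. The stage timings in the check are made-up numbers, and summing them is a simplification: in a real engine, CPU and GPU work overlap across frames, so frame time is not simply the sum of stage times.

```python
def frame_budget_ms(target_fps):
    """Return the time available to produce one frame, in milliseconds."""
    if target_fps <= 0:
        raise ValueError("target_fps must be positive")
    return 1000.0 / target_fps


def fits_budget(stage_times_ms, target_fps):
    """Check whether the summed per-stage costs of one frame fit the budget."""
    return sum(stage_times_ms) <= frame_budget_ms(target_fps)


for fps in (30, 60, 144):
    print(f"{fps} FPS -> {frame_budget_ms(fps):.2f} ms per frame")
```

Running the loop prints budgets of 33.33, 16.67, and 6.94 milliseconds, which shows how quickly the available time shrinks as the target frame rate rises.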

To achieve real-time rendering, several steps must be performed within each frame interval: geometry processing, rasterization, shading, and output of the finished pixels to the display device. These steps are discussed in more detail below.

Geometry processing transforms each vertex of a 3D model from object-space (local) coordinates into world-space coordinates, which places the model at its correct position, orientation, and size within the virtual world. The vertices are then transformed into the camera's view space and projected into clip space, from which their 2D screen positions are derived. Any animations or other changes to the geometry, such as skeletal animation, must also be computed during this stage.
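The object-to-world step can be sketched with 4x4 matrices in homogeneous coordinates. This minimal version uses plain Python lists and the column-vector convention common in OpenGL-style math, where the rightmost matrix is applied first. All helper names are hypothetical; a real engine would use an optimized math library and would follow the model matrix with view and projection matrices.

```python
import math


def mat_mul(a, b):
    """Multiply two 4x4 matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)] for i in range(4)]


def translation(tx, ty, tz):
    return [[1, 0, 0, tx], [0, 1, 0, ty], [0, 0, 1, tz], [0, 0, 0, 1]]


def rotation_y(angle_rad):
    """Right-handed rotation about the Y axis."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return [[c, 0, s, 0], [0, 1, 0, 0], [-s, 0, c, 0], [0, 0, 0, 1]]


def scale(sx, sy, sz):
    return [[sx, 0, 0, 0], [0, sy, 0, 0], [0, 0, sz, 0], [0, 0, 0, 1]]


def transform_point(m, p):
    """Apply a 4x4 matrix to a 3D point using homogeneous coordinates (w = 1)."""
    v = (p[0], p[1], p[2], 1.0)
    out = [sum(m[i][k] * v[k] for k in range(4)) for i in range(4)]
    # Divide by w; for affine model matrices w stays 1.
    return (out[0] / out[3], out[1] / out[3], out[2] / out[3])


# Model matrix: scale, then rotate 90 degrees about Y, then translate.
model = mat_mul(translation(0, 0, -5), mat_mul(rotation_y(math.pi / 2), scale(2, 2, 2)))
print(transform_point(model, (1.0, 0.0, 0.0)))  # approximately (0, 0, -7)
```

Combining scale, rotation, and translation into one matrix means each vertex needs only a single matrix-vector multiply, which is why engines precompute the model matrix once per object rather than per vertex.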

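Rasterization converts the projected geometry into pixels. Once geometry processing has projected each triangle onto the 2D screen, the rasterizer determines which pixels (or samples within pixels) each triangle covers and generates a fragment for each one, interpolating vertex attributes such as texture coordinates and normals across the triangle.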
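Deciding which pixels a projected triangle covers is commonly done with edge functions: a pixel center is inside the triangle when it lies on the same side of all three edges. The sketch below tests only the pixels inside the triangle's bounding box and accepts either winding order. The function names are hypothetical, and unlike GPU rasterizers it does not apply a tie-breaking fill rule, so pixels exactly on an edge shared by two triangles would be drawn by both.

```python
def edge(ax, ay, bx, by, px, py):
    """Edge function: positive if P lies to the left of the directed edge A->B."""
    return (bx - ax) * (py - ay) - (by - ay) * (px - ax)


def rasterize_triangle(v0, v1, v2, width, height):
    """Return the (x, y) pixels whose centers lie inside a screen-space triangle."""
    area = edge(*v0, *v1, *v2)  # twice the signed triangle area
    if area == 0:
        return []  # degenerate triangle covers no pixels
    xs = (v0[0], v1[0], v2[0])
    ys = (v0[1], v1[1], v2[1])
    # Restrict the search to the bounding box, clamped to the screen.
    min_x, max_x = max(int(min(xs)), 0), min(int(max(xs)) + 1, width)
    min_y, max_y = max(int(min(ys)), 0), min(int(max(ys)) + 1, height)
    covered = []
    for y in range(min_y, max_y):
        for x in range(min_x, max_x):
            px, py = x + 0.5, y + 0.5  # sample at the pixel center
            w0 = edge(*v1, *v2, px, py)
            w1 = edge(*v2, *v0, px, py)
            w2 = edge(*v0, *v1, px, py)
            # Inside when every edge function has the same sign as the area,
            # which handles clockwise and counter-clockwise triangles alike.
            if area > 0 and w0 >= 0 and w1 >= 0 and w2 >= 0:
                covered.append((x, y))
            elif area < 0 and w0 <= 0 and w1 <= 0 and w2 <= 0:
                covered.append((x, y))
    return covered


print(len(rasterize_triangle((0, 0), (4, 0), (0, 4), 4, 4)))  # 10 pixels
```

The values `w0`, `w1`, and `w2` divided by `area` are the barycentric coordinates of the pixel center, which is how a rasterizer interpolates vertex attributes across the triangle.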

Shading is the process of determining the color of each pixel from the surface properties at that point, such as its normal, material, and textures, together with the lights present in the scene. This can be a very computationally expensive step, particularly when effects such as shadows or global illumination are computed.
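As a concrete example of a lighting calculation, the Lambertian diffuse model scales the surface color by the cosine of the angle between the surface normal and the direction to the light. This is one of the simplest local lighting models and ignores shadows, specular highlights, and global illumination. The sketch assumes RGB colors as tuples in [0, 1] and non-zero input vectors; the function names are hypothetical.

```python
import math


def normalize(v):
    """Scale a non-zero vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def lambert(normal, to_light, albedo, light_color):
    """Diffuse color: albedo * light_color * max(0, N . L)."""
    n = normalize(normal)
    l = normalize(to_light)
    # Surfaces facing away from the light receive no direct light.
    intensity = max(0.0, dot(n, l))
    return tuple(a * c * intensity for a, c in zip(albedo, light_color))


# Light arriving 60 degrees from the normal delivers half the intensity.
print(lambert((0, 0, 1), (0, math.sqrt(3), 1), (1.0, 1.0, 1.0), (1.0, 1.0, 1.0)))
```

In a GPU renderer the same arithmetic would run in a fragment (pixel) shader, once per covered pixel, which is why shading cost grows with resolution.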

Finally, the shaded pixels are written to a framebuffer and presented on the display device. This step may involve post-processing effects, such as tone mapping or anti-aliasing filters, before the final image is displayed on screen.
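Anti-aliasing smooths the jagged "staircase" edges that appear when each pixel takes only one sample. The most brute-force form is supersampling (SSAA): render at a higher resolution, then average blocks of samples down to the output size. The sketch below shows only that final resolve step on a grayscale image stored as a list of rows; the function name is hypothetical. Other approaches differ in where they act: multisampling (MSAA) works during rasterization, while filters such as FXAA run as post-processing on the finished image.

```python
def resolve_supersampled(image, factor):
    """Average each factor x factor block of a supersampled grayscale image.

    `image` is rendered at `factor` times the output resolution in each
    dimension; the result has the output resolution.
    """
    height = len(image)
    width = len(image[0])
    if height % factor or width % factor:
        raise ValueError("image dimensions must be multiples of factor")
    out = []
    for oy in range(height // factor):
        row = []
        for ox in range(width // factor):
            # Gather all samples that fall inside this output pixel.
            block = [
                image[oy * factor + dy][ox * factor + dx]
                for dy in range(factor)
                for dx in range(factor)
            ]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


# A hard diagonal edge rendered at 2x resolution in each direction.
hi_res = [[1, 1, 1, 0], [1, 1, 0, 0], [1, 0, 0, 0], [0, 0, 0, 0]]
print(resolve_supersampled(hi_res, 2))  # [[1.0, 0.25], [0.25, 0.0]]
```

Partially covered output pixels receive intermediate values, which is what softens the edge. The price is that 2x supersampling in each direction shades four times as many samples.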

Real-time rendering in 3D and 2D

Real-time rendering is the process of generating an image from a 3D or 2D model in real time. It is a key technology in many interactive applications, such as video games, simulations, and training programs.

Real-time rendering can be performed using rasterization, ray tracing, or a combination of both. Rasterization is the most common method because it is far cheaper to compute. Ray tracing simulates the paths of light more accurately, producing more realistic reflections, shadows, and indirect lighting. Recent GPUs include dedicated ray-tracing hardware, and many games now use ray tracing selectively for those effects on top of a rasterized image.
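The core operation of any ray tracer is testing a ray against scene geometry. A minimal example is the ray-sphere intersection, which reduces to solving a quadratic equation for the distance along the ray. This is a sketch with a hypothetical function name; production ray tracers mostly intersect triangles and rely on acceleration structures such as bounding volume hierarchies to avoid testing every object for every ray.

```python
import math


def ray_sphere(origin, direction, center, radius):
    """Return the distance t to the nearest hit in front of the origin, or None.

    Solves |origin + t * direction - center|^2 = radius^2 for t.
    `direction` does not need to be normalized.
    """
    oc = tuple(o - c for o, c in zip(origin, center))
    a = sum(d * d for d in direction)
    b = 2.0 * sum(d * o for d, o in zip(direction, oc))
    c = sum(o * o for o in oc) - radius * radius
    disc = b * b - 4 * a * c
    if disc < 0:
        return None  # the ray misses the sphere
    sqrt_disc = math.sqrt(disc)
    # Try the nearer root first; skip hits behind (or at) the ray origin.
    for t in ((-b - sqrt_disc) / (2 * a), (-b + sqrt_disc) / (2 * a)):
        if t > 1e-6:
            return t
    return None


# A ray from the origin looking down -Z hits a unit sphere centered 5 units away.
print(ray_sphere((0, 0, 0), (0, 0, -1), (0, 0, -5), 1.0))  # 4.0
```

A ray tracer casts at least one such ray per pixel, plus extra rays toward lights and along reflections, which is why ray tracing costs so much more than rasterization.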

No matter which method is used, there are several factors that affect the quality of the final image. These include the resolution of the image, the number of polygons in the model, and the lighting conditions.
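To see why resolution matters so much, it helps to count how many pixel samples must be shaded every second. This quick calculation assumes one shaded sample per pixel and no overdraw; in practice, overdraw and multisampling raise the count further. The function name is hypothetical.

```python
def pixels_per_second(width, height, fps, samples_per_pixel=1):
    """Pixel samples that must be shaded each second (ignoring overdraw)."""
    return width * height * fps * samples_per_pixel


for w, h in ((1920, 1080), (3840, 2160)):
    print(f"{w}x{h} @ 60 FPS: {pixels_per_second(w, h, 60):,} samples/s")
```

At 60 frames per second, 1920x1080 needs 124,416,000 samples per second and 3840x2160 needs 497,664,000, four times as many, because doubling both dimensions quadruples the pixel count.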

With advances in computing power, real-time rendering has become increasingly realistic. Today, real-time renderers can produce images that, in favorable scenes, approach photographic quality.

How does real-time rendering work?

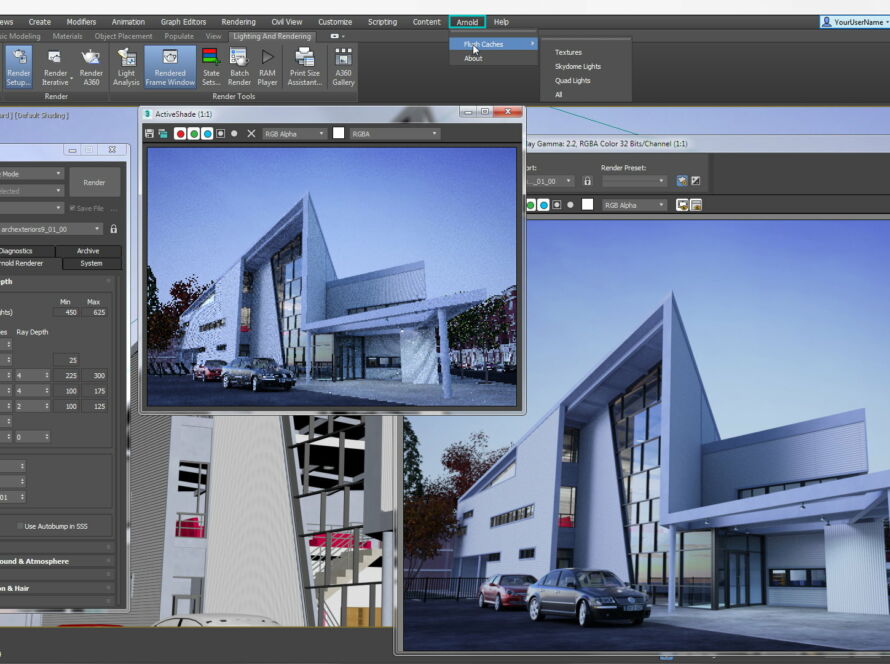

Real-time rendering is the process of generating an image from a scene description as quickly as possible. The goal is to achieve interactive frame rates, measured in frames per second (FPS), so that the user can interact with the rendered scene. This is typically done by using hardware acceleration through a graphics processing unit (GPU).

To render a scene in real time, the renderer needs to be able to quickly generate an image from a given set of input data. This data can come in various forms, such as 3D models, textures, and lighting information. The renderer then uses this data to create an image that can be displayed on a screen.

There are many different algorithms and techniques that can be used to generate an image in real time. Some are more efficient than others, and many trade quality for speed, for example by drawing simplified models for distant objects (level of detail) or by rendering at a lower internal resolution. Ultimately, the goal is to find a balance between quality and performance so that the user has a smooth, responsive experience when interacting with the rendered scene.
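One widely used way to strike that balance while the application runs is dynamic resolution scaling: lower the internal rendering resolution when frames take too long, and raise it again when there is headroom. Below is a minimal proportional controller, assuming GPU cost is roughly proportional to pixel count (and therefore to the square of the per-axis scale). The function and parameter names are hypothetical; real engines typically smooth frame-time measurements over several frames and upscale the lower-resolution image to the display size.

```python
import math


def update_render_scale(scale, frame_time_ms, budget_ms, min_scale=0.5, max_scale=1.0):
    """Adjust the per-axis render scale so frame time approaches the budget.

    Assumes cost is proportional to pixel count, i.e. to scale**2, so the
    scale is multiplied by sqrt(budget / frame_time) and then clamped.
    """
    if frame_time_ms <= 0:
        return scale  # no valid measurement; keep the current scale
    new_scale = scale * math.sqrt(budget_ms / frame_time_ms)
    return min(max(new_scale, min_scale), max_scale)


# A 25 ms frame against a 16 ms budget lowers the scale to about 0.8.
scale = update_render_scale(1.0, frame_time_ms=25.0, budget_ms=16.0)
```

Under this idealized cost model, a frame rendered at scale 0.8 takes about 16 ms, so the controller settles near 0.8 after a single step. The `min_scale` clamp keeps image quality from collapsing when the scene is simply too heavy.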

Conclusion

Thanks for reading! I hope this article has given you a better understanding of what real-time rendering is and how it can benefit you. Whether you’re a gamer looking for an immersive experience or a developer trying to create lifelike characters, real-time rendering is an essential tool. With its ability to generate high-quality images quickly and efficiently, real-time rendering will keep changing the way we interact with digital media.